20 May

Tuning Node.js and V8 settings to unlock 2x performance and efficiency

Node.js has emerged as a go-to server-side runtime environment for building high-performance web applications and ranks among the top 3 most popular languages in Kubernetes environments. At the heart of Node.js lies V8, Google’s high-performance JavaScript engine. V8 provides 100s

22 Nov

Insights from KubeCon 2023 – K8s for GenAI, Efficiency, and Sustainability

Last week, I attended KubeCon 2023 in Chicago, a conference I prioritize as it’s a unique opportunity to learn what the cloud-native community is up to and where it’s headed. I came home with some new insights about the direction of

11 Nov

KubeCon 2022 review: Application-aware Kubernetes comes to town!

Last month the Akamas team had the pleasure of joining 7,000+ attendees as a Silver sponsor of the KubeCon + CloudNativeCon North America 2022 conference, organized by the Cloud Native Computing Foundation (CNCF) in Detroit. What a great time we had, meeting

21 Oct

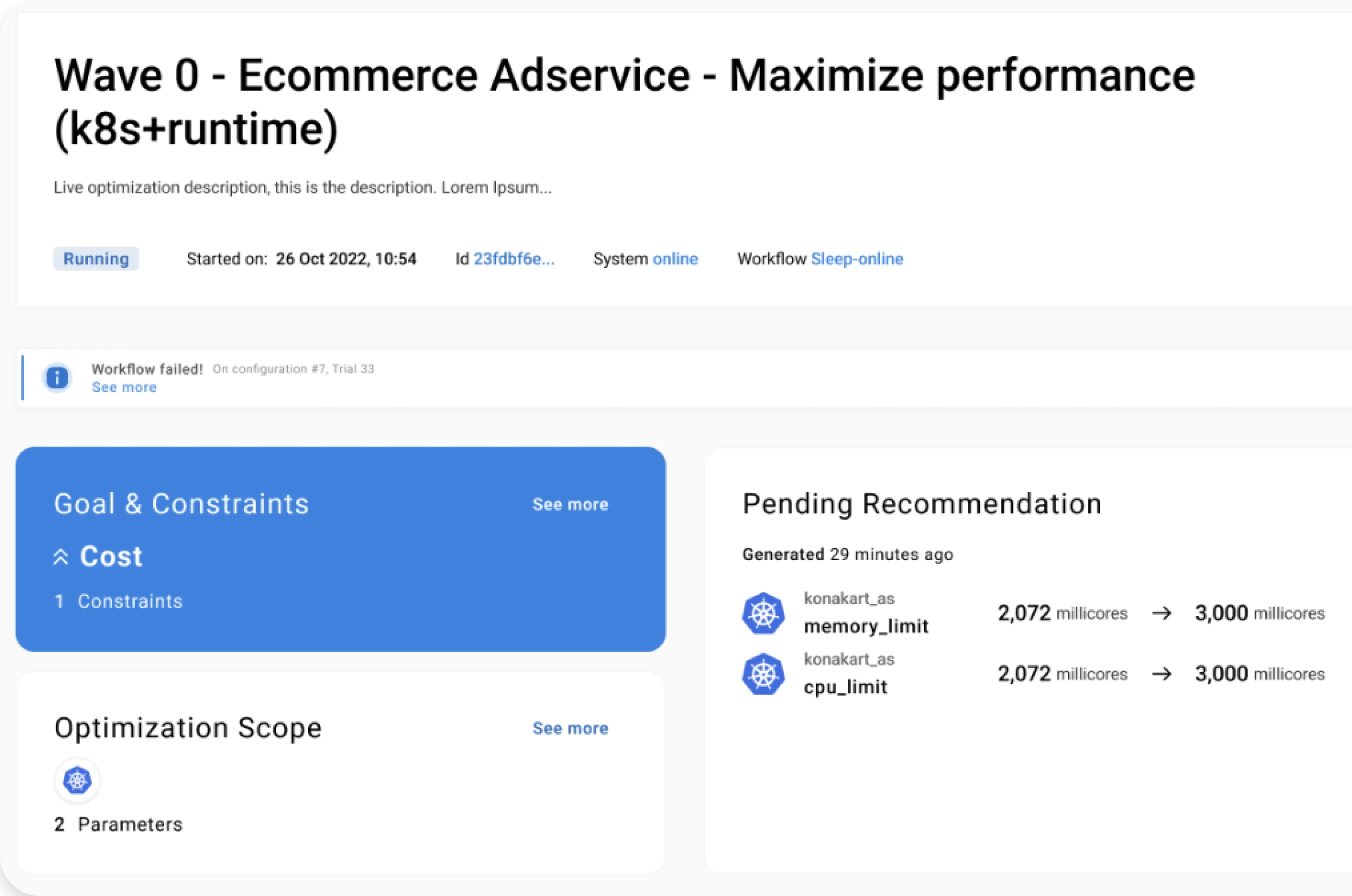

Is AI-powered autonomous optimization the answer to the Kubernetes dilemma?

Notice: An abridged version of this interview has been published as Akamas contributed content by TFIR for KubeCon + CloudNativeCon NA 2022 in Detroit, October 24-28. Stefano Doni, CTO at Kubernetes optimization company Akamas, and long-time CMG contributor and Best Paper

18 Oct

Accelerate digital innovation with Self-service Developer Portals and AI-powered Kubernetes optimiza...

Today, developers often spend more time managing Kubernetes than developing the applications that run on it. This situation is exacerbated by the shortage of Kubernetes skills and by the complexity of developing and delivering well-tuned applications in Kubernetes

07 Jun

Squeezing more (orange) juice from Kubernetes

Our notes from KubeCon + CloudNativeCon in Valencia. KubeCon + CloudNativeCon Europe 2022 – what a fantastic event! I’m sure my feeling is shared by many among the 7,000 in-person attendees and the 10,000 who followed from home. We had the pleasure

14 Apr

Chaos Engineering & Autonomous Optimization combined to maximize resilience to failure

This blog is co-authored by Kyle McMeekin, Head of Channel at Gremlin. Today’s enterprises are struggling to cope with the complexities of their environments, technologies, and applications. On top of these challenges, they face faster release rates, and the need

17 Feb

Beware of Kubernetes autoscaling if you want your services to be reliable and cost-efficient

Many companies delivering services based on applications running in the cloud face much higher costs than expected. The problem is that over-provisioning is too often the approach taken to minimize risks, in particular when development and release cycles are getting shorter

14 Jan

Optimizing your Kubernetes clusters without breaking the bank

Organizations across the world are rapidly adopting Kubernetes. That is because Kubernetes provides several benefits from a performance perspective. Its ability to densely schedule containers into the underlying machines translates to low infrastructure costs. It prevents a runaway container from impacting

13 Oct

How I learned to love Kubernetes with resource and cost optimization

The benefits of Kubernetes from a performance perspective are indisputable. Let’s just consider the efficiency provided by Kubernetes, thanks to its ability to densely schedule containers into the underlying machines, which translates to low infrastructure costs. Or the mechanisms available to isolate

10 Sep

Safely cut cloud bills with no impact on service performance – is it a dream?

Several cloud cost optimization solutions are available today, both from cloud providers, such as AWS Compute Optimizer or Google machine type recommendations, and from specialized COTS vendors. These tools may help you choose the right cloud instance and volume sizes, allocating resources in

03 Sep

How to optimize Spark job performance and lower AWS costs

Big data applications often offer significant opportunities for both performance gains and cost reduction. Typically, the underlying infrastructure – whether on-premises or in the cloud – is both inefficient and over-provisioned to ensure a good performance vs

11 Aug

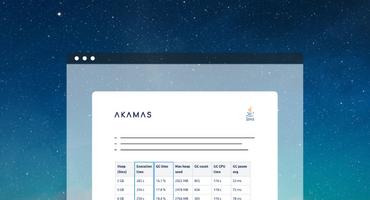

Make sure to choose the right GC for your Java application to achieve maximum performance

The Java platform continues to be developed and improved over time. The OpenJDK community has been quite active in improving the performance of the JVM and the garbage collector (GC): new GCs are being developed and existing ones are constantly

16 Jul

Java application throughput, response time, and cost: how to optimize

Developers and system owners usually take for granted that there are some intrinsic tradeoffs in the Java design. For example, it is commonly accepted that if you aim at reducing resource usage (e.g. CPU), you must accept some performance degradation.