Developers and system owners usually take for granted that there are some intrinsic tradeoffs in the Java design. For example, it is commonly accepted that if you aim at reducing resource usage (e.g. CPU), you must accept some performance degradation. Or that, if you optimize for maximum throughput and lowest latency, then the resource footprint of your Java application (e.g. memory usage) grows.

The general assumption is that it is not possible to improve the throughput, latency, and footprint of a Java application at the same time, and that the best you can achieve is to improve two of these three key performance metrics. This rule, which we refer to as the “JVM short blanket”, is widely considered to be always valid.

In this post, we present results from real-world JVM optimization studies we have conducted, and we show that it is actually possible to improve the throughput, latency, and footprint of your Java applications at the same time.

The JVM short blanket: throughput, response time and cost.

To describe the “JVM short blanket”, we will use the following statement by Charlie Hunt, the world-renowned Java performance expert, taken from his presentation on “The Fundamentals of GC Performance” at the GOTO Chicago conference in 2014:

“Improving one or two of these performance attributes, (throughput, latency or footprint) results in sacrificing some performance in the other. Improving all three performance attributes usually requires a lot of non-trivial development work.”

Charlie Hunt, JVM Engineer at Oracle

It is worth noting that he used a “three-legged stool” metaphor, while others have used the “performance triangle” metaphor to illustrate the same concept.

Before analyzing this statement further, let’s review the three metrics mentioned above (which represent the three corners of the “JVM short blanket”):

- Footprint: the amount of resources (memory and CPU) the JVM needs to run;

- Throughput: the number of operations that your application can complete in a certain period of time;

- Latency: the time a single operation takes to be processed.
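To make the three definitions concrete, here is a minimal sketch of how each metric could be measured from inside a Java process. The class and method names are hypothetical (they do not come from the study described in this post), the measured operation is a placeholder, and only the memory part of the footprint is covered.

```java
import java.lang.management.ManagementFactory;
import java.util.Arrays;

// Illustrative sketch: class and method names are hypothetical.
class ThreeMetrics {

    /** Throughput: operations completed per second. */
    static double throughputPerSecond(long operations, long elapsedNanos) {
        return operations * 1_000_000_000.0 / elapsedNanos;
    }

    /** Latency: nearest-rank percentile (e.g. 90 for the 90th percentile) of per-operation times. */
    static long percentile(long[] latencies, double pct) {
        long[] sorted = latencies.clone();
        Arrays.sort(sorted);
        int rank = (int) Math.ceil(pct / 100.0 * sorted.length);
        return sorted[Math.max(0, rank - 1)];
    }

    /** Footprint (memory part only): heap bytes currently used by this JVM. */
    static long usedHeapBytes() {
        return ManagementFactory.getMemoryMXBean().getHeapMemoryUsage().getUsed();
    }

    public static void main(String[] args) {
        int n = 100_000;
        long[] latencies = new long[n];
        long checksum = 0;
        long start = System.nanoTime();
        for (int i = 0; i < n; i++) {
            long t0 = System.nanoTime();
            checksum += Integer.toString(i).hashCode(); // stand-in for a real operation
            latencies[i] = System.nanoTime() - t0;
        }
        long elapsed = System.nanoTime() - start;
        System.out.printf("throughput: %.0f ops/s%n", throughputPerSecond(n, elapsed));
        System.out.printf("p90 latency: %d ns%n", percentile(latencies, 90));
        System.out.printf("used heap: %d bytes (checksum %d)%n", usedHeapBytes(), checksum);
    }
}
```

In a real study these numbers would normally come from a load-testing tool and a monitoring system rather than from in-process timers, but the definitions stay the same.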

Intuitively, if you consider how Garbage Collection (GC) operates, this makes perfect sense. If you want high throughput and low latency, the GC needs more memory (and possibly more CPU cycles) to make room for application allocations without pausing the application too often. On the other hand, if you want a low footprint and low latency, you have to accept lower throughput, because the GC has to stop the application very frequently, which takes a big toll on application throughput.
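One way to see this tradeoff on a running JVM is to look at how much time the collectors have consumed. The sketch below uses the standard `java.lang.management` beans; the class name `GcOverhead` and the allocation loop are hypothetical, and the reported collection time is only an approximation (for concurrent collectors it is not all pause time).

```java
import java.lang.management.GarbageCollectorMXBean;
import java.lang.management.ManagementFactory;

// Illustrative sketch: the class name and the allocation loop are hypothetical.
class GcOverhead {

    /** Share of JVM uptime spent in GC, as a percentage. */
    static double overheadPercent(long gcMillis, long uptimeMillis) {
        return uptimeMillis <= 0 ? 0.0 : 100.0 * gcMillis / uptimeMillis;
    }

    /** Accumulated collection time over all collectors; undefined values (-1) are skipped. */
    static long totalGcMillis() {
        long total = 0;
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            long t = gc.getCollectionTime();
            if (t > 0) {
                total += t;
            }
        }
        return total;
    }

    public static void main(String[] args) {
        // Allocate short-lived objects so the collectors have some work to do.
        long sink = 0;
        for (int i = 0; i < 200_000; i++) {
            byte[] junk = new byte[1024];
            sink += junk.length;
        }
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            System.out.printf("%s: %d collections, %d ms%n",
                    gc.getName(), gc.getCollectionCount(), gc.getCollectionTime());
        }
        long uptime = ManagementFactory.getRuntimeMXBean().getUptime();
        System.out.printf("GC overhead: %.2f%% (allocated %d bytes)%n",
                overheadPercent(totalGcMillis(), uptime), sink);
    }
}
```

Running the same program with a smaller `-Xmx` value typically increases the number of collections, which is the footprint-versus-throughput tension described above.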

Therefore, it may seem that there is no way to improve throughput, latency, and footprint at the same time, at least not without “some non-trivial development work”. However, we will show that it is actually possible to improve all three of the main Java performance metrics when a different approach to performance tuning is taken.

Achieving higher throughput, lower response time AND smaller footprint is possible!

As stated by another leading Java performance expert, Scott Oaks, author of the famous book “Java Performance: The Definitive Guide”:

“The JVM is highly configurable with literally hundreds of command-line options and switches. These switches provide performance engineers a gold mine of possibilities to explore in the pursuit of the optimal configuration for a given workload on a given platform.”

Scott Oaks, Architect at Oracle

The key to unlocking JVM performance and efficiency lies in being able to exploit, to our advantage, the incredibly vast optimization space that modern JVMs offer.
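To get a feel for this optimization space on your own machine, HotSpot-based JVMs can print every flag and its effective value with `java -XX:+PrintFlagsFinal -version`. The options a process is actually running with can also be inspected programmatically. The sketch below is a minimal example that assumes a HotSpot-based JVM (the `com.sun.management` API is not part of the Java SE standard); the class name `JvmFlags` is hypothetical.

```java
import com.sun.management.HotSpotDiagnosticMXBean;
import java.lang.management.ManagementFactory;
import java.util.List;

// Illustrative sketch: assumes a HotSpot-based JVM; the class name is hypothetical.
class JvmFlags {

    /** Options passed to this JVM on the command line (e.g. -Xmx..., -XX:...). */
    static List<String> inputArguments() {
        return ManagementFactory.getRuntimeMXBean().getInputArguments();
    }

    /** Effective maximum heap size in bytes, read from the HotSpot MaxHeapSize flag. */
    static long maxHeapBytes() {
        HotSpotDiagnosticMXBean hotspot =
                ManagementFactory.getPlatformMXBean(HotSpotDiagnosticMXBean.class);
        return Long.parseLong(hotspot.getVMOption("MaxHeapSize").getValue());
    }

    public static void main(String[] args) {
        System.out.println("JVM arguments: " + inputArguments());
        System.out.println("MaxHeapSize:   " + maxHeapBytes() + " bytes");
    }
}
```

Even when no option is set explicitly, every flag still has a default value chosen by the JVM, so leaving the configuration untouched is itself a tuning decision.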

A striking example of improving throughput, latency, and footprint all at the same time is provided by an enterprise Java application supporting the CRM service of a leading telco.

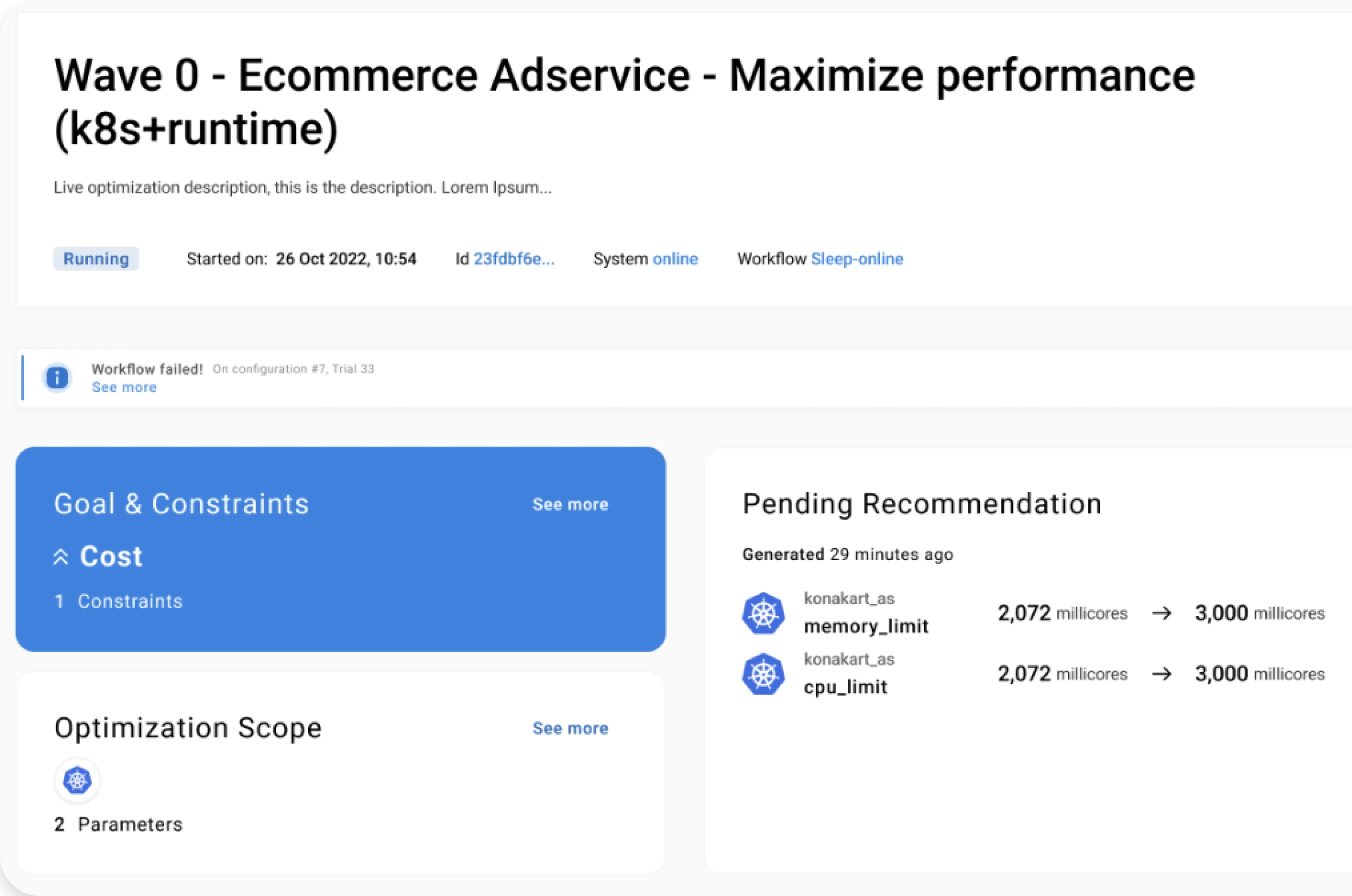

We performed an optimization study supported by Akamas, our AI-powered solution, with the goal of minimizing the footprint of the application (JVM heap size). Since the customer didn’t want the application to become slower, we added constraints to keep throughput and response time at their current levels (allowing at most 5% degradation). We leveraged our Java OpenJDK Optimization Pack to quickly optimize the key parameters among the hundreds the JVM offers.
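The goal and constraints described above can be written down as a small selection rule: among the configurations tried, keep those whose throughput and response time stay within the allowed degradation of the baseline, then pick the one with the smallest heap. The sketch below is a hypothetical illustration of that rule only. It is not the Akamas API or its search algorithm, and all names and numbers in it are made up.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Optional;

// Illustrative sketch: not the Akamas API; all names and numbers are made up.
class FootprintGoal {

    /** One measured experiment: heap size plus the two constrained metrics. */
    static final class Trial {
        final String name;
        final double heapMb;
        final double throughput;  // operations per second
        final double responseMs;  // response time in milliseconds

        Trial(String name, double heapMb, double throughput, double responseMs) {
            this.name = name;
            this.heapMb = heapMb;
            this.throughput = throughput;
            this.responseMs = responseMs;
        }
    }

    /** True if the candidate degrades neither metric by more than maxDegradation (0.05 = 5%). */
    static boolean meetsConstraints(Trial baseline, Trial candidate, double maxDegradation) {
        boolean throughputOk = candidate.throughput >= baseline.throughput * (1.0 - maxDegradation);
        boolean responseOk = candidate.responseMs <= baseline.responseMs * (1.0 + maxDegradation);
        return throughputOk && responseOk;
    }

    /** The trial with the smallest heap among those that satisfy the constraints. */
    static Optional<Trial> best(Trial baseline, List<Trial> trials, double maxDegradation) {
        Trial best = null;
        for (Trial t : trials) {
            if (meetsConstraints(baseline, t, maxDegradation)
                    && (best == null || t.heapMb < best.heapMb)) {
                best = t;
            }
        }
        return Optional.ofNullable(best);
    }

    public static void main(String[] args) {
        Trial baseline = new Trial("baseline", 1000, 100, 50);   // made-up values
        List<Trial> trials = Arrays.asList(
                new Trial("A", 600, 97, 51),    // within 5% on both metrics
                new Trial("B", 300, 90, 60),    // smallest heap, but too slow
                new Trial("C", 400, 99, 52));   // within 5% and smaller than A
        best(baseline, trials, 0.05)
                .ifPresent(t -> System.out.println("best trial: " + t.name + " (" + t.heapMb + " MB)"));
    }
}
```

Note that the constraints only forbid getting worse. Nothing in this formulation prevents the selected configuration from also being faster than the baseline.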

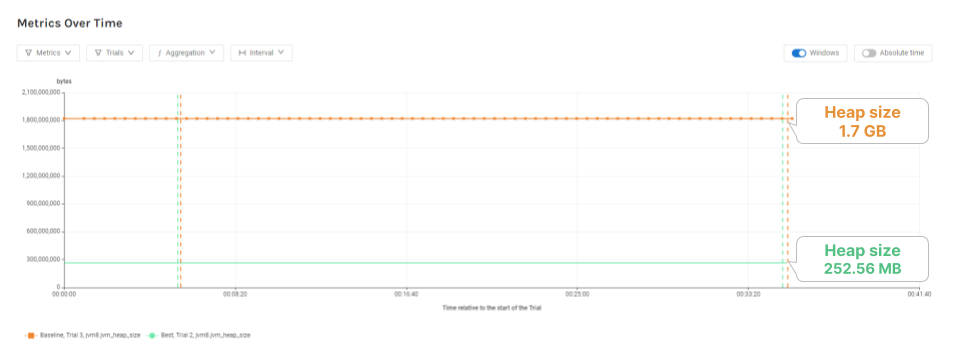

Akamas was able to identify an optimal solution in just 28 fully automated experiments executed in about 20 hours, corresponding to an 85% decrease in the heap size: from 1.7 GB to 252 MB (see the following figure).
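As a quick sanity check of the reported reduction, the percentage can be recomputed from the two heap sizes. The sketch below is a hypothetical helper. Since the post does not say whether 1.7 GB is meant in decimal or binary units, it computes both readings.

```java
import java.util.Locale;

// Illustrative sketch: a hypothetical helper to recompute the reported heap reduction.
class HeapReduction {

    /** Relative decrease from `before` to `after`, as a percentage. */
    static double percentDecrease(double before, double after) {
        return 100.0 * (before - after) / before;
    }

    public static void main(String[] args) {
        double afterMb = 252.0;
        double beforeDecimalMb = 1.7 * 1000; // 1.7 GB read as 1700 MB
        double beforeBinaryMb = 1.7 * 1024;  // 1.7 GB read as 1740.8 MB
        System.out.printf(Locale.ROOT, "decimal units: %.1f%%%n", percentDecrease(beforeDecimalMb, afterMb));
        System.out.printf(Locale.ROOT, "binary units:  %.1f%%%n", percentDecrease(beforeBinaryMb, afterMb));
    }
}
```

Both readings give a decrease slightly above 85% (about 85.2% and 85.5% respectively), consistent with the figure quoted above.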

The result of this optimization study was considered very satisfying by the customer, as it provided the opportunity to safely reduce the heap size while preserving the defined SLOs.

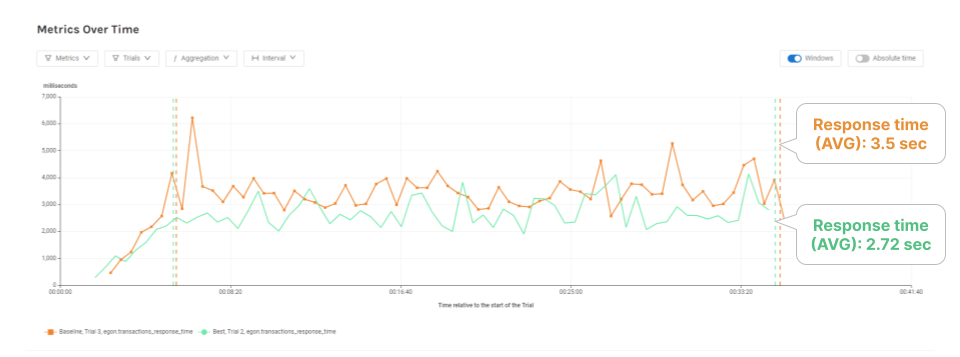

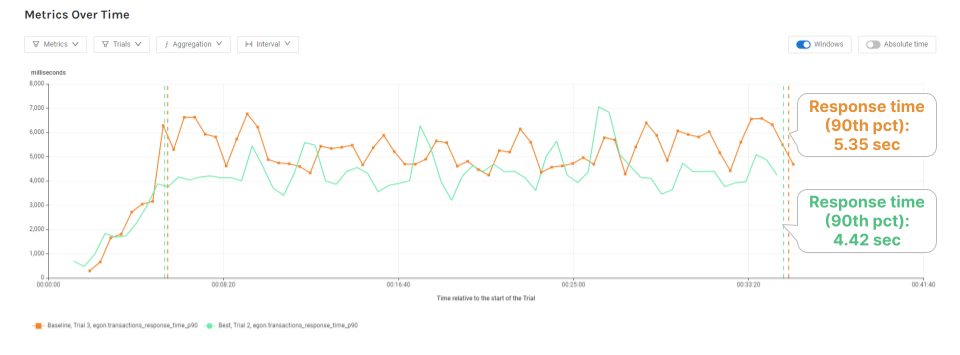

Besides reducing the application memory footprint, this configuration also represents an interesting outcome with respect to the “JVM short blanket” discussion. Indeed, the optimal solution also provided (see the following figures) both a 23% increase in throughput and a decrease in response time of 22% (average) and 17% (90th percentile), which is quite a significant result in itself.

Let’s summarize the result of this optimization study. The JVM configuration identified by Akamas made the application run faster while also reducing the heap size (by more than 85%!). Thus all three metrics (throughput, latency, footprint) were improved simply by tuning the JVM parameters well, an opportunity that would otherwise be left on the table.

Conclusions

In the Java performance world, the general rule is that you cannot improve application latency, throughput, and footprint at the same time without significant development work. This is what we call the “JVM short blanket”.

However, modern JVMs offer a goldmine of 700+ configuration options that software and performance engineers can tune to achieve optimal performance and efficiency. As we have seen, it is actually possible to improve on all three key metrics at the same time.

Finding configurations that improve application throughput, latency, and footprint at the same time requires the ability to intelligently explore the vast space of application configurations. This is made possible by leveraging AI techniques, such as Akamas’ patented Reinforcement Learning, that have been specifically designed to solve optimization problems that are otherwise unmanageable even for the best human experts.

This is the second entry in a series devoted to JVM performance tuning insights and to the new AI-powered approach to performance optimization. You can read the first blog entry here. Stay tuned for the next entries!


Author: Stefano Doni, Co-founder & CTO


© 2024 Akamas S.p.A. All rights reserved. – Via Schiaffino, 11 – 20158 Milan, Italy – P.IVA / VAT: 10584850969